Agents That Don't Wait for Alerts to Fire

How continuous agent hunting collapses the line between detection and threat hunting, and how today’s infrastructure makes it possible.

Welcome to Detection at Scale, a weekly newsletter on AI-first security operations, detection engineering, and the infrastructure that makes modern SOCs work. I’m Jack, founder & CEO at Panther. If you find this valuable, please share it with your team.

Every detection program in production today runs on the same basic loop. A detection engineer studies a threat, translates it into rule logic, and deploys it to a SIEM or detection platform. That rule sits in the pipeline, evaluating events as they flow through, waiting for a match. When the conditions are met, it fires an alert. That alert lands in a queue, and someone picks it up, investigates, and makes a judgment call on whether to close it or escalate it. Then the next alert comes in, and the cycle repeats.

This model has become more sophisticated over the years. Detection-as-code brought version control, testing, and CI/CD to rule management. Behavioral signals replaced some of the noisiest atomic alerts with entity-level risk scoring. AI agents now handle the triage step faster and more consistently than most human analysts can at volume. But the fundamental structure hasn’t yet changed, and detection is still a human-authored, queue-driven process.

Coverage in a rule-based model is bounded by two things: what your detection engineers know to look for, and how much time they have to write and maintain rules for it. Threat hunting exists precisely because teams understand their rules don’t cover everything. Hunters go looking for things the rules missed, patterns that haven’t been codified, behaviors that are too nuanced or too novel for static logic. But hunting is expensive. It requires dedicated time and access to broad datasets and intelligence. Most teams run hunts infrequently, and many don’t sustain a formal hunting program at all.

The industry has spent years optimizing each step in this chain individually: better rules, faster triage, richer enrichment, smarter correlation. What it hasn’t done is question whether the chain itself is the right model when agents are capable of something fundamentally different. In this post, we’ll look at what happens when agents stop waiting for rules to fire and start hunting continuously on their own, how that changes the relationship between detection engineering and threat hunting, and why we’re still early in a transition that will reshape how SOCs generate and act on security findings.

What Changes When Agents Continuously Hunt

An agent with access to a security data lake, enrichment sources, and threat intelligence doesn’t need someone to anticipate a threat before it can look for one. It can form a hypothesis, query the data, evaluate what it finds, and surface a structured finding, all without a rule telling it what to look for.

These building blocks already exist. Agents can now query log data, pull enrichments from identity providers and asset inventories, and cross-reference threat intelligence feeds to put activity in context. Data lakes built on open table formats like Apache Iceberg make months or years of security telemetry queryable in minutes. The agent doesn’t need a detection engineer to write a rule for “unusual cross-account role assumption patterns in AWS”; it can investigate that class of behavior directly by querying CloudTrail, correlating with identity context, and assessing whether the pattern deviates from the environment’s baseline.
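To make “deviates from the environment’s baseline” a little more concrete, here is a minimal Python sketch of one narrow version of that check: flag cross-account `AssumeRole` calls whose (caller account, target role) pair never appeared in a baseline window. It assumes CloudTrail records have already been parsed into dicts carrying `eventSource`, `eventName`, `userIdentity.accountId`, and `requestParameters.roleArn`; the function names are hypothetical, and a real agent would more likely express this as a query against the data lake than iterate in Python.

```python
from typing import Iterable


def role_account(role_arn: str) -> str:
    """Extract the account ID from an ARN like arn:aws:iam::<account-id>:role/<name>."""
    return role_arn.split(":")[4]


def cross_account_pairs(events: Iterable[dict]) -> set[tuple[str, str]]:
    """Collect (caller account, target role ARN) pairs from cross-account AssumeRole calls."""
    pairs = set()
    for e in events:
        if e.get("eventSource") != "sts.amazonaws.com" or e.get("eventName") != "AssumeRole":
            continue
        caller_account = e["userIdentity"]["accountId"]
        role_arn = e["requestParameters"]["roleArn"]
        # Only keep calls where the caller lives in a different account than the role.
        if caller_account != role_account(role_arn):
            pairs.add((caller_account, role_arn))
    return pairs


def novel_cross_account_assumptions(baseline_events: Iterable[dict],
                                    recent_events: Iterable[dict]) -> set[tuple[str, str]]:
    """Cross-account pairs seen in the recent window but never in the baseline window."""
    return cross_account_pairs(recent_events) - cross_account_pairs(baseline_events)
```

Novelty against a historical window is only one possible deviation test; it is used here because it is easy to read, not because it is the right baseline model for every environment.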

What makes this different from existing threat-hunting workflows is its duration and autonomy. A human-led hunt is a project: someone defines a hypothesis, allocates time, runs queries, documents findings, and moves on. It happens once, and then it’s over until the next hunt. An agent-led hunt can run perpetually. The same investigative logic that a senior analyst would apply during a focused hunt can execute on a rolling basis, scanning for patterns across log sources, adjusting based on what it finds, and surfacing results as they emerge. The concept of a “hunt” as a discrete event starts to dissolve when the hunter never stops looking.

Continuous agent hunting is the practice of deploying AI agents to independently scan security telemetry on a rolling basis, forming and testing hypotheses without waiting for a pre-authored detection rule to trigger. It collapses the distinction between “detection” and “threat hunting” into a single, ongoing process.
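Much of the difference between a one-off hunt and a continuous one comes down to state: each pass has to remember where the previous one stopped and avoid re-reporting what it already surfaced. A minimal Python sketch of one pass, assuming each hypothesis is a plain callable that searches a time window and returns stable finding keys (a hypothetical stand-in for the agent’s query-and-evaluate step, not any particular framework’s API):

```python
from datetime import datetime, timedelta
from typing import Callable

# Hypothetical stand-in for an agent's query-and-evaluate step: takes a time window,
# returns stable keys identifying anything it judged worth surfacing.
Hypothesis = Callable[[datetime, datetime], list[str]]


def run_hunt_cycle(hypotheses: list[Hypothesis], checkpoint: datetime, now: datetime,
                   seen: set[str], overlap: timedelta = timedelta(minutes=5)):
    """Run one pass of a continuous hunt; return (new finding keys, next checkpoint)."""
    start = checkpoint - overlap  # re-scan a little earlier to catch late-arriving logs
    new_findings = []
    for hypothesis in hypotheses:
        for key in hypothesis(start, now):
            if key not in seen:  # the overlap can re-surface old results; report each once
                seen.add(key)
                new_findings.append(key)
    return new_findings, now
```

A scheduler would call `run_hunt_cycle` repeatedly, persisting `seen` and the returned checkpoint between passes. In practice `seen` would also need to expire old keys so it doesn’t grow without bound.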

This changes the role of detections in the overall security architecture. Rules don’t go away, but they no longer serve as the sole source of signal in the SOC. The alert queue gets populated by two streams: deterministic alerts from rules that matched known-bad patterns, and probabilistic findings from agents that noticed something worth investigating. The implication is that coverage is no longer gated solely by what your team had time to write rules for. The spaces between your rules, the gaps that today only get examined during periodic hunts, become continuously monitored territory.

From Alert Queues to Continuous Findings

In an agent-led SOC model, the alert queue still exists, but what populates it changes. Alongside the familiar rule-based alerts, agents surface findings from their own continuous analysis. The output looks similar to an alert (entities, evidence, confidence, next steps), but its origin is fundamentally different: it came from reasoning over data rather than a pattern match against a rule. These findings should be routed through the same case management or ticketing workflow as rule-based alerts.
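One way to keep both streams in the same workflow is to give them a shared shape and let only an `origin` field distinguish them. A minimal Python sketch; the field names and the ticket payload are hypothetical and not tied to any specific case-management product:

```python
from dataclasses import dataclass, field
from typing import Literal, Optional


@dataclass
class QueueItem:
    """One entry in the shared queue, whether a rule or an agent produced it."""
    title: str
    origin: Literal["rule", "agent"]  # deterministic pattern match vs. agent reasoning
    entities: list[str]
    evidence: list[str]
    confidence: float                 # rule matches can simply be recorded as 1.0
    next_steps: list[str] = field(default_factory=list)
    rule_id: Optional[str] = None     # populated only for rule-based alerts


def to_ticket(item: QueueItem) -> dict:
    """Render either kind of item into one ticket payload (field names are hypothetical)."""
    lines = ["Entities: " + ", ".join(item.entities), "Evidence:"]
    lines += [f"- {e}" for e in item.evidence]
    lines += ["Next steps:"] + [f"- {s}" for s in item.next_steps]
    return {
        "summary": item.title,
        "labels": [f"origin:{item.origin}"],
        "confidence": item.confidence,
        "description": "\n".join(lines),
    }
```

Keeping the origin as a label rather than a separate queue lets analysts filter by source while triage, escalation, and metrics stay identical for both.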

The next question is how to scope these hunts so agents aren’t just running open-ended queries against your entire log corpus. The answer is to give them an opinionated starting point, and this is where the signals pattern becomes foundational. If you’ve already been labeling security-relevant events (authentication changes, privilege escalations, infrastructure modifications) and storing them as a filtered layer on top of your raw logs, you’ve built exactly the dataset that continuous agent hunting should operate against. These signals represent the <1% of your log volume that is security-relevant but not necessarily alert-worthy on its own. A new IAM role creation in AWS, a service account added to a privileged group, or a conditional access policy change in Entra ID: none of these individually justifies waking someone up, but all of them are the kinds of activity an attacker would generate.
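At its simplest, the signal layer is a catalog that maps raw event types to labels, plus a filter that keeps only the labeled events. A minimal Python sketch, assuming parsed CloudTrail records as dicts; the IAM event names are real CloudTrail `eventName` values, but the catalog contents and the label strings are hypothetical and deliberately small:

```python
# Hypothetical signal catalog: (eventSource, eventName) pairs a team has decided are
# security-relevant. Real catalogs are larger and span many log sources.
SIGNAL_CATALOG = {
    ("iam.amazonaws.com", "CreateRole"): "iam.role_created",
    ("iam.amazonaws.com", "AttachRolePolicy"): "iam.policy_attached",
    ("iam.amazonaws.com", "UpdateAssumeRolePolicy"): "iam.trust_policy_modified",
    ("iam.amazonaws.com", "CreateAccessKey"): "iam.access_key_created",
}


def label_signals(raw_events):
    """Yield raw events tagged with a signal label, dropping everything else."""
    for event in raw_events:
        label = SIGNAL_CATALOG.get((event.get("eventSource"), event.get("eventName")))
        if label is not None:
            yield {**event, "signal": label}
```

Writing the output of `label_signals` to its own table is what gives a hunting agent a small, pre-filtered starting point instead of the full raw log stream.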

The mindset shift is subtle. In the rule-based model, you ask: “What specific pattern is malicious enough to fire an alert?” In the continuous hunting model, you ask: “What category of security-relevant activity should an agent be regularly reviewing for anomalies, patterns, or context that my rules wouldn’t catch?” You’re defining scopes, not rigid signatures.

In practice, each scope is a prompt that tells an agent what to look for. The structure should mirror what you’d write for a triage agent: define the threat model, explain why this activity matters, give the agent clear criteria for distinguishing risky from benign, call out common false positives, and specify the enrichment steps and external context sources that will help it make a confident judgment.
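Keeping those parts as separate fields, rather than one free-form string, makes a scope easier to review and diff like any other detection artifact. A minimal Python sketch; the `HuntScope` class and its section names are hypothetical, chosen to match the labels used in the prompt that follows:

```python
from dataclasses import dataclass


@dataclass
class HuntScope:
    """Hypothetical container for the parts of a hunting brief."""
    scope: str
    threat_model: str
    benign_vs_risky: str
    false_positives: list[str]
    investigation_steps: list[str]
    output: str

    def to_prompt(self) -> str:
        """Render the scope as the plain-text brief an agent receives."""
        fps = "\n".join(f"- {fp}" for fp in self.false_positives)
        steps = "\n".join(f"{i}. {s}" for i, s in enumerate(self.investigation_steps, 1))
        sections = [
            f"Scope: {self.scope}",
            f"Threat model: {self.threat_model}",
            f"Assessing benign vs. risky: {self.benign_vs_risky}",
            f"Common false positives:\n{fps}",
            f"Investigation steps:\n{steps}",
            f"Output: {self.output}",
        ]
        return "\n\n".join(sections)
```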

Here’s an example hunt prompt for IAM analysis in AWS:

Scope: Review all IAM signals from the past 24 hours, including role creations, policy attachments, trust policy modifications, and access key generation.

Threat model: An attacker who has gained initial access to an AWS environment will often escalate privileges by creating new IAM roles, attaching permissive policies, or modifying trust relationships to enable cross-account movement. These changes are how an adversary establishes persistence and expands access beyond their initial foothold. IAM modifications are among the highest-value signals in a cloud environment because they directly affect who can access what.

Assessing benign vs. risky: Most IAM changes in a healthy environment are made by infrastructure-as-code pipelines (Terraform, CloudFormation, Pulumi) running through CI/CD systems with known service roles. The key question isn’t whether the change happened, but whether the identity, method, and context are consistent with the organization’s normal change patterns. A role creation by a Terraform execution role during a deployment window, correlated with a recent merge in the infrastructure repository, is almost certainly routine. A role creation by a human identity through the console, especially one that doesn’t typically make IAM changes, is worth investigating.

Common false positives: Platform engineering teams making manual IAM changes during incident remediation or environment bootstrapping. Automated security tooling (like AWS Config remediation or SCPs applied by a governance pipeline) that generates IAM signals as a side effect. Sandbox or development accounts where IAM experimentation is expected and doesn’t carry production risk.

Investigation steps: For each IAM signal, query the identity’s activity history over the past 30 days via the SIEM to establish whether IAM changes are part of their normal pattern. Check the identity provider via MCP to determine the user’s role, team, and whether their account has been flagged for any access reviews. Correlate with CI/CD activity (via GitHub or deployment platform MCP) to determine if the change aligns with a recent code merge or deployment. Look for surrounding signals within a two-hour window: new console logins, AssumeRole calls from unfamiliar source accounts, changes to CloudTrail logging configuration, or S3 bucket policy modifications that could indicate data staging.

Output: Produce a structured finding for any activity where the combination of identity, method, timing, and surrounding context suggests behavior outside the expected operational pattern. Include confidence level, supporting evidence, the enrichment sources consulted, and whether the finding warrants analyst review or can be logged as a tracked observation.
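Parts of the prompt’s judgment criteria can also be approximated in code, which is useful as a reference point when checking whether an agent’s verdicts match the team’s intent. A minimal Python sketch, assuming the enrichment lookups (30-day SIEM history, CI/CD merge check, console vs. API) have already been resolved to booleans. The role ARN, signal labels, and decision order are hypothetical, and “a two-hour window” is read here as two hours on either side of the change:

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical values; substitute your own pipeline roles and signal labels.
IAC_ROLES = {"arn:aws:iam::123456789012:role/terraform-exec"}
SURROUNDING = {"console_login", "assume_role_unfamiliar_source",
               "cloudtrail_config_change", "s3_bucket_policy_change"}
WINDOW = timedelta(hours=2)  # interpreted as two hours either side of the change


@dataclass
class IamChange:
    actor_arn: str
    time: datetime
    via_console: bool                # deriving this from CloudTrail is out of scope here
    actor_usually_changes_iam: bool  # result of the 30-day history query
    recent_infra_merge: bool         # result of the CI/CD lookup


def surrounding_signals(change: IamChange, signals: list[dict]) -> list[dict]:
    """Correlated signals within WINDOW of the change, oldest first."""
    lo, hi = change.time - WINDOW, change.time + WINDOW
    hits = [s for s in signals if s["signal"] in SURROUNDING and lo <= s["time"] <= hi]
    return sorted(hits, key=lambda s: s["time"])


def assess(change: IamChange, signals: list[dict]) -> tuple[str, list[dict]]:
    """Apply identity, method, and context criteria; return (verdict, supporting context)."""
    context = surrounding_signals(change, signals)
    if change.actor_arn in IAC_ROLES and change.recent_infra_merge and not context:
        return "routine", context
    if (change.via_console and not change.actor_usually_changes_iam) or context:
        return "investigate", context
    return "review_context", context
```

The ordering is deliberately conservative: any correlated signal in the window pushes a change toward investigation, even when it came from a pipeline role.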

That prompt doesn’t specify a single pattern that’s definitely malicious. It defines a threat model, provides the agent with judgment criteria to distinguish routine operations from attacker behavior, and points it to the enrichment sources that enable a confident assessment. The agent might find nothing notable for three days straight and then surface a finding on day four because a contractor’s identity, one that the identity provider shows hasn’t completed its latest access review, created an IAM role with a cross-account trust policy an hour after authenticating via the console for the first time in weeks. No rule was written for that exact chain of events. The agent connected the signals because it was told what mattered, why it mattered, and how to evaluate what it found.
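The last step in that example, turning the agent’s assessment into either an analyst-facing finding or a tracked observation, can be sketched as a small routing function. A minimal Python sketch, assuming the finding arrives as a dict and the agent supplies a 0.0 to 1.0 confidence; the threshold, field names, and the sample values in the usage block are hypothetical, loosely modeled on the contractor scenario above:

```python
import json

REVIEW_THRESHOLD = 0.6  # hypothetical cut-off; tune it against analyst feedback


def finalize(finding: dict) -> str:
    """Attach a disposition and serialize the finding for the queue."""
    required = {"summary", "confidence", "evidence", "sources_consulted"}
    missing = required - set(finding)
    if missing:
        raise ValueError(f"finding is missing fields: {sorted(missing)}")
    disposition = ("analyst_review" if finding["confidence"] >= REVIEW_THRESHOLD
                   else "tracked_observation")
    return json.dumps({**finding, "disposition": disposition}, indent=2)


if __name__ == "__main__":
    # Illustrative values only; not real output from any agent.
    print(finalize({
        "summary": "Contractor created IAM role with cross-account trust policy",
        "confidence": 0.8,
        "evidence": ["first console login in weeks",
                     "role created about an hour after login",
                     "latest access review not completed"],
        "sources_consulted": ["SIEM", "identity provider", "CI/CD"],
    }))
```

Low-confidence results are kept rather than discarded, so a later pass can still connect them to new activity.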

You can build these hunting scopes across any domain where you have structured security signals: configuration changes, identity-based access anomalies, or data movement in cloud storage. Each scope is a standing brief to an agent, and over time, they become a library of continuous hunts, each running on a cadence that matches the risk profile of the activity it monitors: IAM changes reviewed daily, data movement signals reviewed hourly, authentication policy changes reviewed in near real-time.
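Matching cadence to risk can be as simple as storing an interval per scope and running whichever scopes are due. A minimal Python sketch; the scope names are hypothetical, the daily and hourly intervals come from the text, and “near real-time” is approximated here as every five minutes, which is an assumption:

```python
from datetime import datetime, timedelta

# Cadences from the text; "near real-time" is approximated as 5 minutes (assumption).
CADENCES = {
    "iam_changes": timedelta(days=1),
    "data_movement": timedelta(hours=1),
    "auth_policy_changes": timedelta(minutes=5),
}


def due_scopes(now: datetime, last_run: dict[str, datetime]) -> list[str]:
    """Return scopes whose cadence has elapsed since their last run; never-run scopes are due."""
    return [name for name, every in CADENCES.items()
            if name not in last_run or now - last_run[name] >= every]
```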

What Happens to Detection Engineering

Detection engineering doesn’t disappear in this model, but its center of gravity shifts. Rules become the deterministic baseline: high-confidence, high-severity patterns that you want firing every time. If someone disables CloudTrail logging on a production account, you don’t need an agent to hypothesize about whether that’s interesting.
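The CloudTrail case is exactly what a deterministic rule is for. A minimal sketch as a Python `rule(event) -> bool` function over a CloudTrail record parsed into a dict; the function shape is an assumption modeled loosely on Python-based detection platforms, and the production account list is hypothetical. `StopLogging` and `DeleteTrail` are real CloudTrail API actions:

```python
# Actions that turn off or remove a trail; real CloudTrail API names.
CLOUDTRAIL_TAMPERING = {"StopLogging", "DeleteTrail"}
PRODUCTION_ACCOUNTS = {"123456789012"}  # hypothetical account list


def rule(event: dict) -> bool:
    """Fire every time CloudTrail logging is stopped or a trail is deleted in production."""
    return (event.get("eventSource") == "cloudtrail.amazonaws.com"
            and event.get("eventName") in CLOUDTRAIL_TAMPERING
            and event.get("recipientAccountId") in PRODUCTION_ACCOUNTS)
```

There is no judgment to delegate here: the match is binary, cheap to evaluate on every event, and easy to test.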

But the coverage between those high-confidence rules, the territory that today only gets examined during periodic hunts if it gets examined at all, becomes the domain of continuous agent analysis. Detection engineers spend less time trying to write rules for every conceivable variation of a behavior and more time defining the hunting scopes that agents operate against. The work shifts from authoring pattern-match logic to articulating threat models, judgment criteria, and enrichment paths that agents can execute on a rolling basis. In a sense, the prompt we walked through in the previous section is what a detection artifact starts to look like: not a rule, but a standing brief that encodes how your team thinks about a class of threat.

This doesn’t reduce the rigor of detection engineering. If anything, it raises the bar. Writing a rule that reliably matches a specific log pattern is already hard. Writing a hunting scope that teaches an agent to distinguish attacker behavior from routine operations across multiple data sources, with clear guidance on false positives and enrichment steps, requires deeper threat modeling and a more explicit articulation of what “suspicious” actually means in your environment. The discipline gets harder, not easier, but the coverage it produces is dramatically broader.

We’re Still in the Early Innings

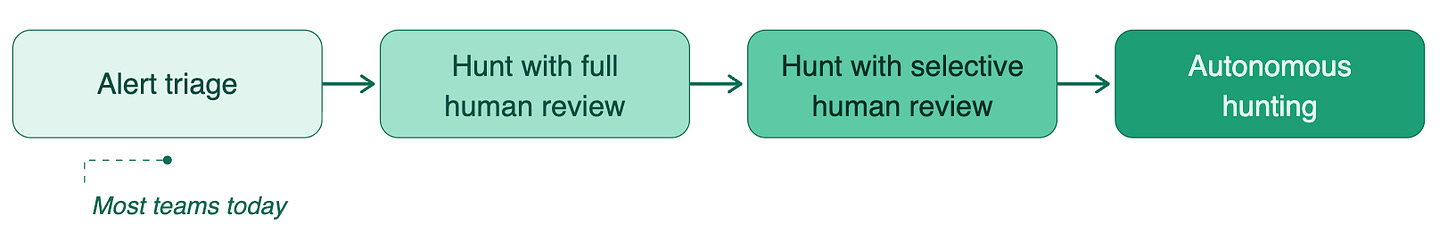

Most security teams today are still in the first phase of the agentic transition: agents triaging alerts written by humans. That’s a meaningful step, and teams doing it well are already seeing real gains in analyst productivity and response consistency. But it’s a long way from agents generating their own findings autonomously.

Continuous agent hunting only works if agents have something worth hunting through. You need a queryable data lake with enough retention to establish behavioral baselines, structured security signals that give agents an opinionated starting point rather than a raw log firehose, and MCP tooling that connects agents to enrichment sources outside the SIEM. Without those foundations, an agent hunting continuously is just burning tokens and compute against unstructured data, producing low-confidence findings that create more work than they save. The economics of this architecture are directly tied to how well you’ve curated the data underneath it.

The trust model is also immature. Most organizations aren’t ready to act on a finding that an agent surfaced independently without a human validating the reasoning. That’s a reasonable position today, and teams should build toward autonomy incrementally rather than flipping a switch.

Each step toward greater autonomy requires the agent to earn trust through transparent reasoning and consistent accuracy. Most teams are somewhere in the first or second stage, and that’s fine. The architecture described in this post is what makes the later stages possible.

But the trajectory is clear. If you’re investing in a security data lake, curated security-relevant signals, detections designed for agent reasoning, and MCP connections to your enrichment sources, you’re building the architecture that makes continuous agent hunting possible. The flywheel that detection engineering always promised (detect, learn, improve, repeat) finally spins at a pace that matches the threat landscape. The constraint was never the data or the models. It was the human bottleneck in the loop.

Cover photo by Oxana Melis on Unsplash